Data Engineering Books

5 Books for Data Engineers

Building foundations and framing your viewpoint on data engineering

About 3 years ago, I started my IT career as a Data Engineer, and since then I have been looking for day-to-day solutions and answers around the data platform. I have always hoped to find resources in this field that work like university textbooks, and I kept looking for them.

In this article, I share 5 books that helped me build a concrete overview of Data Engineering, books I can go back to whenever I doubt my understanding.

First, because there are many books to choose from, I propose a framework to help you pick what suits you best, and then I share some thoughts on each book.

Where do I start?

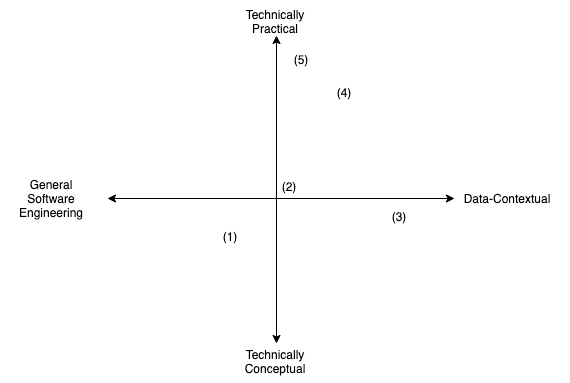

I came up with 2 factors we can use to draw a chart and place each book on it.

The first is ‘Technical Conceptuality vs Practicality’: whether a book deals with general implementation concepts or with a specific implementation (or API). The second is ‘Generality vs Data Contextuality’: whether its content applies to systems in general or is specific to the data environment.

Here is the chart plotted along these two factors:

Here are the books and why I chose them:

- (1) I Heart Logs by Jay Kreps: It explains the role of logs in distributed environments. It is relatively short, but it helped me grasp the core concept behind data systems (databases, or distributed data systems like Kafka). I had first run into the concept on the LinkedIn blog before reading the book.

- (2) Designing Data-Intensive Applications by Martin Kleppmann: It covers the core concepts of data systems, such as data models, transactions and distributed systems (e.g. two-phase locking and two-phase commit), and batch and stream processing.

- (3) Rebuilding Reliable Data Pipelines Through Modern Tools by Ted Malaska: If most of your experience lies outside the data field, this book is a good starting point for understanding what goes on in it. It covers the stakeholders in a data environment, data pipelines, and common issues (many of which are specific to the data environment).

- (4) Expert Hadoop Administration by Sam R. Alapati: There is also a good O’Reilly book on Hadoop, but I chose this one because I have re-read it again and again over the past year whenever I needed a thorough answer (What configuration does the server running the HDFS NameNode need? Where should I look to monitor HDFS?).

- (5) Architecting Modern Data Platforms by Jan Kunigk, Ian Buss, Paul Wilkinson, Lars George: A good book with fantastic diagrams and images. Compared to (4), it focuses more on what lies outside the Hadoop services themselves (server RAM and CPU specifications, network bandwidth requirements, etc.).

Main Contents of each book

Some are short, while others take real commitment to start. So I share what I found most influential in each one, to help you start with the book that fits you.

I Heart Logs (~ 50 Pages)

The author, Jay Kreps, one of the developers of Kafka and Samza, argues that the log, which we usually picture as the files a web server like Nginx writes, plays a central role in databases and distributed systems, and that log-centric designs offer many benefits, including for consensus, compared to the alternatives.

He also walks through practical applications: ‘Data Integration’, ‘Real-Time Data Processing’, and ‘Distributed System Design’.

One of them is the log acting as a ‘single source of truth’: an integrated log sits between many ‘write’ systems and ‘read’ systems, decoupling the two.
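To make the ‘single source of truth’ idea a little more concrete, here is a minimal Python sketch. It assumes a toy, in-memory log and is not how Kafka or any real system is implemented; the class names `Log`, `KeyValueView`, and `ChangeCounter` are hypothetical. Writers only append records, and each reader keeps its own offset and derives its own state by replaying the log, so writers never need to know who consumes their data.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class Log:
    """Hypothetical toy log: an append-only, totally ordered list of records."""
    records: List[Any] = field(default_factory=list)

    def append(self, record: Any) -> int:
        """Append a record and return its offset (its position in the log)."""
        self.records.append(record)
        return len(self.records) - 1

    def read_from(self, offset: int) -> List[Any]:
        """Return a copy of every record at or after the given offset."""
        return self.records[offset:]


@dataclass
class KeyValueView:
    """Hypothetical reader: rebuilds the latest value per key by replaying the log."""
    log: Log
    offset: int = 0
    state: Dict[str, Any] = field(default_factory=dict)

    def catch_up(self) -> None:
        for key, value in self.log.read_from(self.offset):
            self.state[key] = value  # later records overwrite earlier ones
            self.offset += 1


@dataclass
class ChangeCounter:
    """Hypothetical second reader: counts updates per key, independently of the view."""
    log: Log
    offset: int = 0
    counts: Dict[str, int] = field(default_factory=dict)

    def catch_up(self) -> None:
        for key, _ in self.log.read_from(self.offset):
            self.counts[key] = self.counts.get(key, 0) + 1
            self.offset += 1


# Writers only append; they do not know which readers exist.
log = Log()
log.append(("user:1", "Alice"))
log.append(("user:2", "Bob"))
log.append(("user:1", "Alicia"))

# Each reader derives its own state from the same log at its own pace.
view = KeyValueView(log)
counter = ChangeCounter(log)
view.catch_up()
counter.catch_up()
print(view.state)      # {'user:1': 'Alicia', 'user:2': 'Bob'}
print(counter.counts)  # {'user:1': 2, 'user:2': 1}
```

Because each reader tracks its own offset, adding another reader later only means replaying from offset 0; the writers stay unchanged.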

I put it first because Jay Kreps’ viewpoint simplifies the essential architecture of distributed data systems, and you can carry that viewpoint into any other system you study.

Designing Data-Intensive Applications (~ 550 Pages)

Many of you have probably heard of it already. It covers core concepts and their common implementations, from earlier data systems (RDB, NoSQL) to distributed environments (Hadoop and others).

The core concepts that tend to make you doubt your understanding are handled thoroughly: data models, data structures, encoding and schema evolution, replication, partitioning, transactions, and the main issues of distributed systems.

It also gives you a perspective on Hadoop and the Lambda Architecture, rather than a ‘how-to’.

Personally, I frequently go back to it to refresh the fundamentals whenever I feel conceptually polluted.

Rebuilding Reliable Data Pipelines Through Modern Tools (~ 100 Pages)

This book, which is free on the Unravel site, teaches you who the stakeholders in a data environment are and what the ETL (Extract, Transform, Load) landscape looks like.

It relies on simple metaphors, yet it is practical enough to make you ‘feel’ what it would be like to work as a data engineer in the environment it describes.

Ted Malaska has also written a more comprehensive book, but I think this concise one is enough to build your knowledge base; from there, you can find your way by googling.

Expert Hadoop Administration (~ 750 Pages)

For professionals who struggle with Hadoop services, it is hard to find good resources for solving practical problems with HDFS, Yarn, Oozie, Sqoop, and so on.

If you face questions like ‘What server configurations and specifications do we need to install HDFS?’ or ‘How do we optimize Yarn memory and CPU usage?’, this long and detailed book is a good first stop.

If it feels too long, you can read just the HDFS, Yarn, and Spark architecture parts (~ 351 Pages) and come back when you need more.

Architecting Modern Data Platforms (~ 600 Pages)

As you can guess from the chart above, this book is full of technical material on the Hadoop stack for building a data center at scale.

Whereas the previous book (4) focuses on the features of Hadoop services, this one teaches topics outside the services themselves: server, network, and OS specifications for a Hadoop environment, virtualization, and more.

You’ll find wonderful images that stay with you and frame your view of how Hadoop services work with the underlying infrastructure.

For those who have had a taste of the Hadoop stack and want to dig into questions like ‘Do the vCores in Yarn applications correspond to physical cores or to virtual cores (in a virtualized environment)?’ and ‘How do the file system (ext3, ext4) or page cache settings affect HDFS performance?’, this is an invaluable resource to feed your curiosity.